Proxy Harvester

Harvest and Test Proxies

If you need to find and test proxies, ScrapeBox has a powerful proxy harvester and tester built in. Many automation tools, including ScrapeBox, can use multiple proxies for tasks such as harvesting URLs from search engines, creating backlinks, or scraping emails, to name a few.

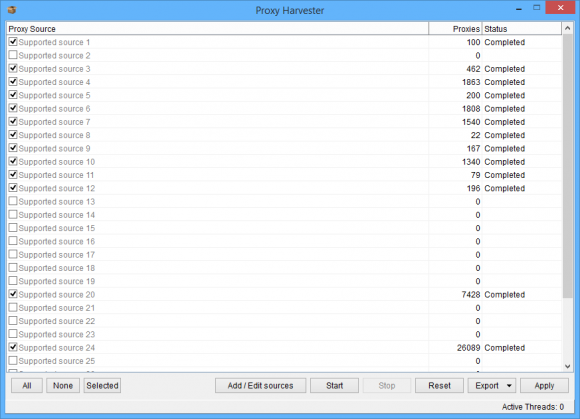

Many websites publish daily lists of proxies for you to use. You could manually visit these sites, copy the lists into another tool to test them, then copy the working proxies into the tool you finally want to use them in, but the ScrapeBox Proxy Manager offers a far simpler solution. It has 22 proxy sources already built in, and it lets you add custom sources by adding the URLs of any sites that publish proxies.

Then when you run the Proxy Harvester, it visits each website, extracts all the proxies from the pages, and automatically removes duplicate proxies that are published on multiple websites. So with one click you can pull in thousands of proxies from numerous websites.
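The extract-and-deduplicate step described above can be sketched in a few lines of Python. This is only an illustration of the idea, not ScrapeBox's actual implementation: the regular expression, the function names `extract_proxies` and `harvest`, and the assumption that proxies appear as plain `ip:port` text are all hypothetical.

```python
import re

# Matches candidate IPv4:port pairs such as 203.0.113.7:8080.
# Octet and port ranges are validated separately below.
PROXY_RE = re.compile(r"\b(\d{1,3}(?:\.\d{1,3}){3}):(\d{1,5})\b")


def extract_proxies(text):
    """Return the valid ip:port strings found in text, in order of appearance."""
    found = []
    for ip, port in PROXY_RE.findall(text):
        octets_ok = all(0 <= int(octet) <= 255 for octet in ip.split("."))
        port_ok = 0 < int(port) <= 65535
        if octets_ok and port_ok:
            found.append(f"{ip}:{port}")
    return found


def harvest(pages):
    """Merge proxies from several page bodies, dropping duplicates."""
    seen = set()
    merged = []
    for body in pages:
        for proxy in extract_proxies(body):
            if proxy not in seen:
                seen.add(proxy)
                merged.append(proxy)
    return merged
```

Passing the downloaded body of each source page to `harvest` would return one merged list in which a proxy published on several sites appears only once.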

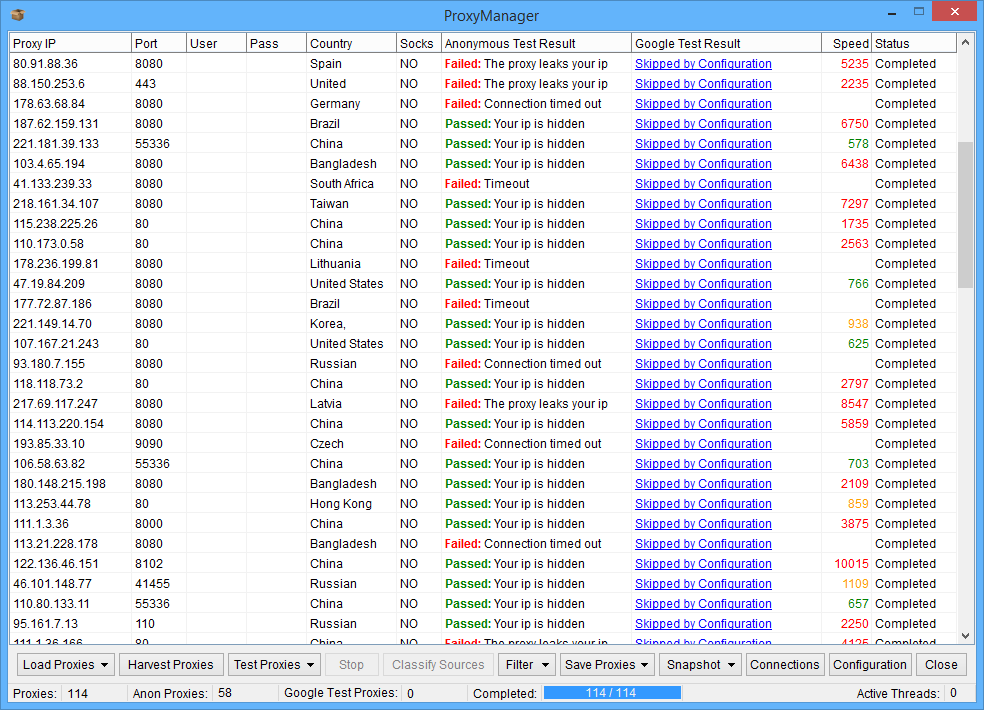

Next, the proxy tester can run numerous checks on the proxies you scraped. There are options for removing or keeping only proxies with specific ports, and for keeping or removing proxies from specific countries. You can also mark proxies as SOCKS and test private proxies, which require a username and password to authenticate.

The proxy tester is also multi-threaded, so you can adjust the number of simultaneous connections used while testing and set the connection timeout. It can also test whether proxies work with Google by running a search query on Google and checking whether search results are returned.
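To make the idea of a multi-threaded tester with an adjustable connection count and timeout concrete, here is a minimal Python sketch. It is not how ScrapeBox works internally: the names `http_check` and `check_proxies`, the default test URL, and the default values are assumptions chosen for illustration.

```python
import http.client
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def http_check(proxy, timeout, test_url="http://example.com/"):
    """Return the response time in seconds if test_url loads via proxy, else None."""
    handler = urllib.request.ProxyHandler({"http": f"http://{proxy}"})
    opener = urllib.request.build_opener(handler)
    start = time.monotonic()
    try:
        with opener.open(test_url, timeout=timeout) as response:
            response.read(1)
    except (OSError, http.client.HTTPException):
        # Timeouts, refused connections, and malformed replies all count as failures.
        return None
    return time.monotonic() - start


def check_proxies(proxies, check=http_check, threads=10, timeout=5.0):
    """Test proxies concurrently and return {proxy: seconds} for the working ones."""
    with ThreadPoolExecutor(max_workers=threads) as pool:
        times = list(pool.map(lambda proxy: check(proxy, timeout), proxies))
    return {proxy: t for proxy, t in zip(proxies, times) if t is not None}
```

Raising `threads` tests more proxies at once, while `timeout` caps how long a slow or dead proxy can hold up a worker. The `check` parameter is injectable so a different test, such as a search-engine query, could be swapped in.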

This way you can filter proxies for use when harvesting URLs from Google.

Or you can use the “Custom Test” option, which you can see here in the configuration settings. There you can add any URL you want the proxy tester to check against, such as Craigslist, and specify something on the webpage, such as a unique piece of text or HTML, that shows the proxy is working.
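The logic behind a custom test is simple: fetch a chosen URL through the proxy and look for a known marker in the response. The Python sketch below illustrates that idea only; `CustomTest`, `passes`, and the `fetch` callable are hypothetical names, and the real option may behave differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomTest:
    url: str     # page the proxy must be able to load
    marker: str  # unique text or HTML expected on that page


def passes(test, fetch, proxy):
    """Return True if the page fetched through proxy contains the marker.

    fetch is any callable taking (url, proxy) and returning the page body
    as a string; it is assumed to raise OSError on network failure.
    """
    try:
        body = fetch(test.url, proxy)
    except OSError:
        return False
    return test.marker in body
```

Checking for a marker rather than just a successful connection guards against proxies that respond but return an error page, a block notice, or injected content.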

Once proxy testing is complete, you have numerous options: you can retest failed proxies, retest any proxies not yet checked (so you can stop and restart where you left off at any time), or highlight and retest specific proxies. You can also sort proxies by any field, such as IP address, port number, and speed.

To clean up your proxy list, you can filter proxies by speed and keep only the fastest proxies, keep only anonymous proxies, or keep only Google-passed proxies. They can then be saved to a text file or used in ScrapeBox.
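As a rough illustration of this kind of clean-up, the Python sketch below filters a tested list by speed, anonymity, and Google status, then sorts it fastest first. The `ProxyResult` fields, the millisecond unit, and the function names are assumptions made for the example, not ScrapeBox's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProxyResult:
    address: str         # "ip:port"
    speed_ms: int        # measured response time in milliseconds
    anonymous: bool      # True if the proxy hid the client's IP address
    google_passed: bool  # True if a Google search returned results


def clean_up(results, max_speed_ms=None, anonymous_only=False, google_only=False):
    """Keep the proxies that match every requested filter, fastest first."""
    kept = [
        r for r in results
        if (max_speed_ms is None or r.speed_ms <= max_speed_ms)
        and (not anonymous_only or r.anonymous)
        and (not google_only or r.google_passed)
    ]
    return sorted(kept, key=lambda r: r.speed_ms)


def to_text(results):
    """Render one proxy per line, ready to be written to a text file."""
    return "\n".join(r.address for r in results)
```

For example, `to_text(clean_up(results, max_speed_ms=500, google_only=True))` would produce a list of only the Google-passed proxies that responded within half a second.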

When it finishes, the Proxy Tester can even send you an email to let you know your proxies are ready!

Many users have also set up ScrapeBox as a dedicated proxy harvester and tester using our Automator Plugin. With it, ScrapeBox can run in a loop around the clock, harvesting and testing proxies at set time intervals and saving the good proxies to a file, so there are always fresh proxies available for ScrapeBox and their other internet marketing or SEO tools.

Harvest Proxies

Proxy Harvester comes preloaded with a number of proxy sources which publish daily proxy lists, and you are free to add your own sites.

So whenever you need to find working proxies, you can scan either the included sources or your own proxy sources in order to locate and extract proxies from the internet.

You can also classify proxy sources, so when you test the proxies ScrapeBox can remember which proxies came from which source.

Then you can display metrics on how many proxies a source returned, what percentage of those proxies were working, and what percentage work with Google.
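The per-source metrics described here amount to a simple tally. The following Python sketch shows one way to compute them, assuming each tested proxy is recorded as a `(source, working, google_passed)` tuple and that both percentages are taken relative to the number of proxies the source returned. The function name and data layout are hypothetical.

```python
from collections import defaultdict


def source_metrics(results):
    """Summarize tested proxies per source.

    results is an iterable of (source, working, google_passed) tuples,
    one per proxy. Percentages are relative to the proxies found.
    """
    totals = defaultdict(lambda: {"found": 0, "working": 0, "google": 0})
    for source, working, google_passed in results:
        row = totals[source]
        row["found"] += 1
        row["working"] += bool(working)
        row["google"] += bool(google_passed)

    report = {}
    for source, row in totals.items():
        report[source] = {
            "found": row["found"],
            "working_pct": round(100 * row["working"] / row["found"], 1),
            "google_pct": round(100 * row["google"] / row["found"], 1),
        }
    return report
```

Sorting the report by `working_pct` or `google_pct` would then surface the most productive sources and expose the ones that return only dead proxies.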

So if you have a huge list of sources and don’t know which ones work, which don’t, and which have not been updated, ScrapeBox can classify your source lists and give metrics on the most productive ones.

Trainable Sources

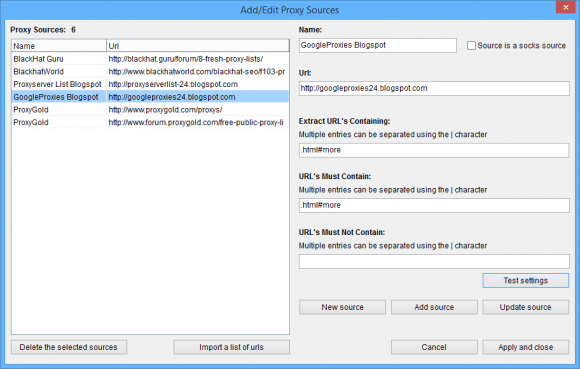

Trainable proxy scanner means you can fully configure where you want to scrape proxies from.

You can also extract links from pages, and then find proxies on the pages those links point to.

Why is this so useful?

You can add the index of a proxy forum or a proxy blog, and ScrapeBox can then fetch all the forum posts or blog posts and drill down into each page, extracting the proxies published on each.

This gives it the ability to extract hundreds of thousands of proxies from just a single source.
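The drill-down approach can be pictured as a two-level crawl: collect the links on an index page, then scan each linked page for proxies. The Python sketch below illustrates that flow with the standard library only. The `fetch` callable, the class and function names, and the simple `ip:port` pattern are assumptions for illustration and do not reflect how ScrapeBox is built.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin

# Simplified ip:port pattern; it does not validate octet or port ranges.
PROXY_RE = re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}:\d{1,5}\b")


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def drill_down(index_url, fetch):
    """Fetch the index, follow each link once, and collect unique proxies.

    fetch is any callable that takes a URL and returns the page body as text.
    """
    collector = LinkCollector()
    collector.feed(fetch(index_url))

    proxies = []
    visited = set()
    for href in collector.links:
        page_url = urljoin(index_url, href)  # resolve relative links
        if page_url in visited:
            continue
        visited.add(page_url)
        for proxy in PROXY_RE.findall(fetch(page_url)):
            if proxy not in proxies:
                proxies.append(proxy)
    return proxies
```

Because `fetch` is passed in, the same function can be tried against saved pages first to check which links and proxies would be extracted before pointing it at a live source.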

There’s also a handy “Test” feature, which you can see here, so you can check which URLs will be extracted and then which proxies will be extracted from those individual pages. It makes training and configuring the source scraper a breeze.

Proxy Harvester Tutorial

View our video tutorial showing the Proxy Harvester in action. This feature is included with ScrapeBox, and is also compatible with our Automator Plugin.

We have hundreds of video tutorials for ScrapeBox.
